The case for autonomous underwriting

In larger commercial insurance lines, where the complexity lives, every submission is data-heavy and uniquely structured. Most of what's being called “AI” today is some form of document extraction, email routing, or form pre-filling. It produces faster decisions on the same workflows that existed five years ago. These are real improvements, but they are not transformative.

What's being built is automation: a faster version of the same system. The human stays in the same seat. The workflow stays the same. The constraint stays the same. Headcount still determines how much business you can write.

Autonomy is a different bet.

Automation vs. autonomy

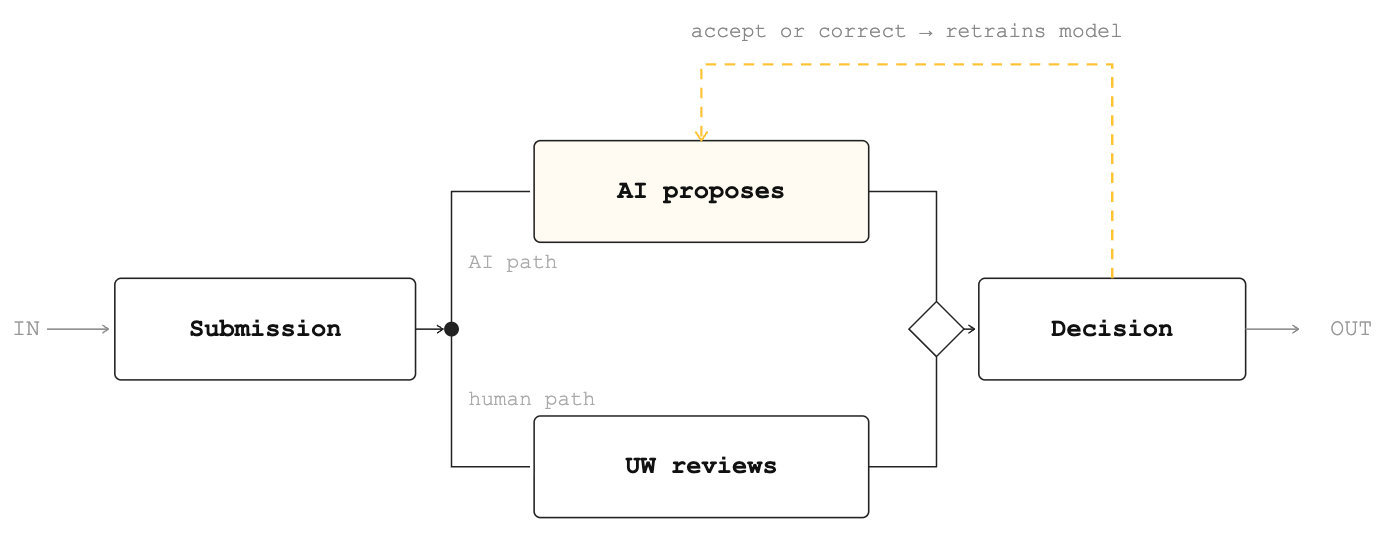

Automation asks: how do we make this faster? Autonomy asks: what authority has the system earned?

The distinction matters because these two paths produce entirely different architectures, entirely different data strategies, and entirely different outcomes over time. Automation optimizes the existing process. Autonomy replaces it, not all at once, but gradually, as the system proves it deserves more authority. The human role doesn't disappear; it evolves. The underwriter goes from doing the work to reviewing it, from reviewing it to judging it, and eventually to architecting the portfolio while the system handles execution.

A word for the skeptics

There is a version of this argument that makes capacity providers nervous. We've heard it, and the concern is legitimate enough to name directly.

If AI is making underwriting decisions, who is accountable when the loss ratio moves? If the human is no longer in the loop, what happens to the judgment that separates good risk from bad? Autonomy sounds like a way to write more business faster, and in insurance, volume without discipline is how you blow up a portfolio.

We are not making an argument for speed over judgment. We are making an argument that judgment, when it is consistent, data-driven, and free from the cognitive biases of manual workflows, produces better outcomes. Not worse.

The honest problem with human underwriting at scale is not that humans are bad underwriters. It's that humans are inconsistent ones. The same risk evaluated on a Friday afternoon gets a different answer than on a Tuesday morning. Appetite drifts. New hires bring old habits with them. Guidelines get interpreted differently across a team. Institutional knowledge lives in people, not systems, and walks out the door when they leave.

Autonomy doesn't remove judgment from underwriting. It encodes it. Every guardrail, every confidence threshold, every escalation path is a formalization of what good underwriting looks like, built on thousands of real decisions, tested against real outcomes, and applied consistently across every submission.

The result is actually more disciplined underwriting. A portfolio underwritten autonomously within a well-defined domain, with clear escalation criteria and continuous outcome monitoring, will outperform one where quality depends on who happened to be in the office that day.

Capacity providers don't trust us because we have good intentions. They trust us because we are building the evidence that earns that trust, one graduation level at a time.

The incumbents are solving the wrong problem

Some traditional carriers and MGUs are investing in AI by purchasing software off the shelf. The dominant playbook is sensible: find where humans spend time and use AI to speed it up. This preserves the existing org. It doesn't threaten headcount; it assists it.

And that's exactly the problem.

Autonomy requires a different architecture. It requires a system that treats every human correction as a training signal, every accepted recommendation as an earned mile, every outcome as ground truth. You can't bolt that on. It's something you build from the start. And incumbents won't make that shift, not because they lack the resources, but because the incentive to preserve the existing model is too strong.

The gap between automation and autonomy is where this industry gets rebuilt.

The Foundation

We didn't start with agents at Shepherd. We had to start with making the data usable by AI.

Most commercial insurance platforms are built around human interfaces. Forms that humans fill, workflows that humans drive, systems that wait for input before doing anything. We built something different. Over the last four years, we've built infrastructure that automatically parses exposure data and loss history from submission documents, classifies risk, and structures information that previously lived in PDFs and email threads. It's the unglamorous work. It's the work most companies skip because it doesn't ship as a feature.

That decision changed who we hired. Most MGUs at our stage would have a team of underwriting assistants whose primary job is data entry. We don't have that role. We eliminated it by building systems that do it better. Our underwriters don't process paperwork. They make decisions.

It's a different theory of the business. While competitors are building AI tools on top of teams structured for manual work, we built the team around the assumption that the manual work should go away. And it has.

The results speak for themselves. We've issued more than 1,500 policies covering over $400 billion in insured project value for 600+ customers. The agents we're now deploying sit on top of four years of structured data, parsed decisions, and institutional knowledge that no competitor has. You cannot build autonomous systems on unstructured data.

Vertical training data is the moat

General-purpose AI is remarkable. But in high-stakes, highly regulated professional domains, it hits its limits.

The reasoning required to underwrite a $200k GL policy for a mid-market contractor isn't in any foundation model's pretraining data. It lives in the decisions made by underwriters across thousands of accounts: the accepts, the edits, the exceptions, the declinations. That knowledge is encoded nowhere except in the behavior of the people who made those calls.

This is why vertical training data compounds in a way that horizontal tools cannot replicate. Every accepted AI recommendation is a labeled example. Every correction is a signal. Every outcome, whether bound, lost, or reflected in the loss ratio, is ground truth. The loop tightens with every submission. The same account parsed today produces better results than the same account parsed last quarter.
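As a rough illustration of that loop, the sketch below shows how one reviewed recommendation could be turned into a labeled example. It is a minimal sketch under stated assumptions, not a description of Shepherd's actual system: the `Recommendation` record, its field names, and `to_training_example` are all hypothetical names introduced here for illustration.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Review(Enum):
    ACCEPTED = "accepted"    # underwriter kept the proposed value
    CORRECTED = "corrected"  # underwriter changed the proposed value


@dataclass(frozen=True)
class Recommendation:
    """One AI-proposed value and what the underwriter did with it (hypothetical schema)."""
    submission_id: str
    field_name: str
    proposed: str
    final: str                     # value the underwriter kept or entered
    outcome: Optional[str] = None  # e.g. "bound" or "lost"; None until known

    @property
    def review(self) -> Review:
        # An unchanged value counts as an acceptance; anything else is a correction.
        return Review.ACCEPTED if self.proposed == self.final else Review.CORRECTED


def to_training_example(rec: Recommendation) -> dict:
    """Turn a reviewed recommendation into a labeled example.

    The underwriter's final value is the label, the correction flag is the
    signal, and the eventual outcome (if known) is attached as ground truth.
    """
    return {
        "input": (rec.submission_id, rec.field_name),
        "label": rec.final,
        "was_corrected": rec.review is Review.CORRECTED,
        "outcome": rec.outcome,
    }
```

For example, `to_training_example(Recommendation("sub-1", "limit", "A", "B", outcome="bound"))` yields a record labeled `"B"` and flagged as corrected, while an unchanged value yields an accepted example.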

Four years of structured decisions from thousands of policies is a moat, and it widens with every interaction.

Authority is earned, not declared

Waymo didn't reach autonomous driving by announcing it. They drove millions of miles, measured interventions per mile, and graduated only when the data proved the system was ready.

The same logic applies to underwriting. You don't deploy autonomy. You accumulate evidence for it. Every level of authority requires measurable thresholds: acceptance rates, correction rates, accuracy sustained over volume and time. The system acts when it has earned the right to act. When it hasn't, it stops and asks for help.

At Shepherd, we measure progress the way Waymo measures miles: Accepted AI Recommendations. Every time an agent proposes a value and an underwriter accepts it, that's one mile. The path from assisted underwriting to fully autonomous underwriting is the story of that metric scaling, and the human correction rate approaching zero.
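To make the idea of earned authority concrete, here is one way a graduation check could be sketched. This is an assumption-laden illustration, not Shepherd's implementation: `GraduationCriteria`, its field names, and the threshold values in the usage note are hypothetical. Each period is a pair of counts, accepted recommendations and corrected ones, and authority is granted only if every recent period has enough volume and a low enough correction rate.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class GraduationCriteria:
    """Hypothetical thresholds for one level of authority."""
    min_reviewed_per_period: int  # volume needed for a period to count
    max_correction_rate: float    # corrected / reviewed must not exceed this
    sustained_periods: int        # consecutive recent periods that must pass


def accepted_miles(periods: list[tuple[int, int]]) -> int:
    """Total accepted AI recommendations across all periods."""
    return sum(accepted for accepted, _ in periods)


def has_earned_authority(
    periods: list[tuple[int, int]], criteria: GraduationCriteria
) -> bool:
    """Return True only if the most recent periods all meet the criteria.

    `periods` is ordered oldest to newest; each entry is (accepted, corrected).
    """
    if criteria.sustained_periods < 1:
        raise ValueError("sustained_periods must be at least 1")
    recent = periods[-criteria.sustained_periods:]
    if len(recent) < criteria.sustained_periods:
        return False  # not enough history yet
    for accepted, corrected in recent:
        reviewed = accepted + corrected
        if reviewed == 0 or reviewed < criteria.min_reviewed_per_period:
            return False  # too little volume to draw a conclusion
        if corrected / reviewed > criteria.max_correction_rate:
            return False  # underwriters are still correcting too often
    return True
```

With illustrative criteria of 100 reviews per period, a 5% maximum correction rate, and three sustained periods, a history of `[(90, 10), (97, 3), (98, 2), (99, 1)]` passes because the last three periods qualify. The first three periods alone would not, since the oldest has a 10% correction rate. When the check fails, the system stays at its current level and keeps escalating to a human.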

The future of commercial insurance is autonomous. Agentic underwriting is driving an evolution from human-driven processes to systems of authority underpinned by proprietary datasets about the customers and markets they serve. The carriers that earn that autonomy, through domain-specific AI, smart use of proprietary data, and deep encoding of their own organizational knowledge, will look nothing like the ones that came before.